Emotion Detection

Contents

Emotion Detection#

This tutorial is available as an IPython notebook at malaya-speech/example/emotion.

This module is language independent, so it save to use on different languages.

This is an application of malaya-speech Pipeline, read more about malaya-speech Pipeline at malaya-speech/example/pipeline.

Dataset#

Trained on Toronto emotional speech set (TESS) with augmented noises, https://tspace.library.utoronto.ca/handle/1807/24487

[1]:

import malaya_speech

import numpy as np

from malaya_speech import Pipeline

[2]:

y, sr = malaya_speech.load('speech/video/The-Singaporean-White-Boy.wav')

len(y), sr

[2]:

(1634237, 16000)

[3]:

# just going to take 30 seconds

y = y[:sr * 30]

[4]:

import IPython.display as ipd

ipd.Audio(y, rate = sr)

[4]:

This audio extracted from https://www.youtube.com/watch?v=HylaY5e1awo&t=2s

Supported emotions#

[5]:

malaya_speech.emotion.labels

[5]:

['angry',

'disgust',

'fear',

'happy',

'sad',

'surprise',

'neutral',

'not an emotion']

List available deep model#

[6]:

malaya_speech.emotion.available_model()

INFO:root:last accuracy during training session before early stopping.

[6]:

| Size (MB) | Quantized Size (MB) | Accuracy | |

|---|---|---|---|

| vggvox-v2 | 31.1 | 7.92 | 0.9509 |

| deep-speaker | 96.9 | 24.40 | 0.9315 |

Load deep model#

def deep_model(model: str = 'vggvox-v2', quantized: bool = False, **kwargs):

"""

Load emotion detection deep model.

Parameters

----------

model : str, optional (default='vggvox-v2')

Model architecture supported. Allowed values:

* ``'vggvox-v2'`` - finetuned VGGVox V2.

* ``'deep-speaker'`` - finetuned Deep Speaker.

quantized : bool, optional (default=False)

if True, will load 8-bit quantized model.

Quantized model not necessary faster, totally depends on the machine.

Returns

-------

result : malaya_speech.supervised.classification.load function

"""

[7]:

vggvox_v2 = malaya_speech.emotion.deep_model(model = 'vggvox-v2')

deep_speaker = malaya_speech.emotion.deep_model(model = 'deep-speaker')

/Users/huseinzolkepli/Documents/tf-1.15/env/lib/python3.7/site-packages/tensorflow_core/python/client/session.py:1750: UserWarning: An interactive session is already active. This can cause out-of-memory errors in some cases. You must explicitly call `InteractiveSession.close()` to release resources held by the other session(s).

warnings.warn('An interactive session is already active. This can '

Load Quantized deep model#

To load 8-bit quantized model, simply pass quantized = True, default is False.

We can expect slightly accuracy drop from quantized model, and not necessary faster than normal 32-bit float model, totally depends on machine.

[8]:

quantized_vggvox_v2 = malaya_speech.emotion.deep_model(model = 'vggvox-v2', quantized = True)

WARNING:root:Load quantized model will cause accuracy drop.

How to classify emotions in an audio sample#

So we are going to use VAD to help us. Instead we are going to classify as a whole sample, we chunk it into multiple small samples and classify it.

[9]:

vad = malaya_speech.vad.deep_model(model = 'vggvox-v2')

[10]:

%%time

frames = list(malaya_speech.utils.generator.frames(y, 30, sr))

CPU times: user 1.85 ms, sys: 100 µs, total: 1.95 ms

Wall time: 1.98 ms

[11]:

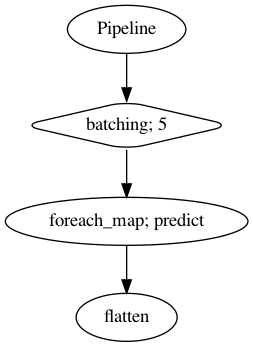

p = Pipeline()

pipeline = (

p.batching(5)

.foreach_map(vad.predict)

.flatten()

)

p.visualize()

[11]:

[12]:

%%time

result = p.emit(frames)

result.keys()

/Users/huseinzolkepli/Documents/tf-1.15/env/lib/python3.7/site-packages/librosa/core/spectrum.py:224: UserWarning: n_fft=512 is too small for input signal of length=480

n_fft, y.shape[-1]

CPU times: user 33.7 s, sys: 6.16 s, total: 39.9 s

Wall time: 10.4 s

[12]:

dict_keys(['batching', 'predict', 'flatten'])

[13]:

frames_vad = [(frame, result['flatten'][no]) for no, frame in enumerate(frames)]

grouped_vad = malaya_speech.utils.group.group_frames(frames_vad)

grouped_vad = malaya_speech.utils.group.group_frames_threshold(grouped_vad, threshold_to_stop = 0.3)

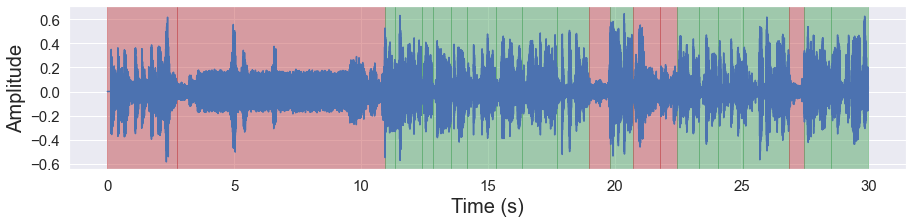

[14]:

malaya_speech.extra.visualization.visualize_vad(y, grouped_vad, sr, figsize = (15, 3))

[15]:

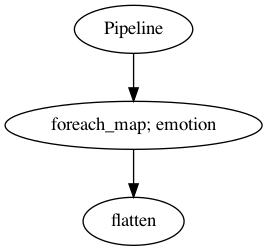

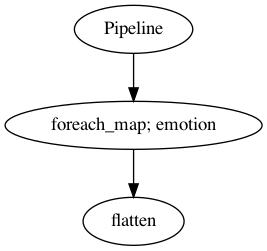

p_vggvox_v2 = Pipeline()

pipeline = (

p_vggvox_v2.foreach_map(vggvox_v2)

.flatten()

)

p_vggvox_v2.visualize()

[15]:

[16]:

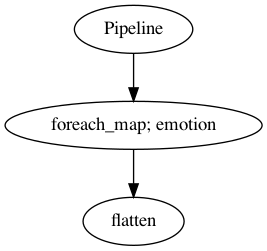

p_deep_speaker = Pipeline()

pipeline = (

p_deep_speaker.foreach_map(deep_speaker)

.flatten()

)

p_deep_speaker.visualize()

[16]:

[17]:

%%time

samples_vad = [g[0] for g in grouped_vad]

result_vggvox_v2 = p_vggvox_v2.emit(samples_vad)

result_vggvox_v2.keys()

CPU times: user 4.83 s, sys: 886 ms, total: 5.72 s

Wall time: 1.77 s

[17]:

dict_keys(['emotion', 'flatten'])

[18]:

%%time

samples_vad = [g[0] for g in grouped_vad]

result_deep_speaker = p_deep_speaker.emit(samples_vad)

result_deep_speaker.keys()

CPU times: user 5.47 s, sys: 927 ms, total: 6.39 s

Wall time: 1.97 s

[18]:

dict_keys(['emotion', 'flatten'])

[19]:

samples_vad_vggvox_v2 = [(frame, result_vggvox_v2['flatten'][no]) for no, frame in enumerate(samples_vad)]

samples_vad_vggvox_v2

[19]:

[(<malaya_speech.model.frame.Frame at 0x1489370d0>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x148943210>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x1489431d0>, 'fear'),

(<malaya_speech.model.frame.Frame at 0x148943290>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x1489432d0>, 'neutral'),

(<malaya_speech.model.frame.Frame at 0x148943350>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943390>, 'neutral'),

(<malaya_speech.model.frame.Frame at 0x1489433d0>, 'angry'),

(<malaya_speech.model.frame.Frame at 0x148943450>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943410>, 'sad'),

(<malaya_speech.model.frame.Frame at 0x148943310>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943490>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x1489434d0>, 'sad'),

(<malaya_speech.model.frame.Frame at 0x148943510>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x148943550>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x1489435d0>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943590>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943650>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943690>, 'sad'),

(<malaya_speech.model.frame.Frame at 0x148943610>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x148943710>, 'angry'),

(<malaya_speech.model.frame.Frame at 0x1489436d0>, 'not an emotion')]

[20]:

samples_vad_deep_speaker = [(frame, result_deep_speaker['flatten'][no]) for no, frame in enumerate(samples_vad)]

samples_vad_deep_speaker

[20]:

[(<malaya_speech.model.frame.Frame at 0x1489370d0>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x148943210>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x1489431d0>, 'surprise'),

(<malaya_speech.model.frame.Frame at 0x148943290>, 'angry'),

(<malaya_speech.model.frame.Frame at 0x1489432d0>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943350>, 'happy'),

(<malaya_speech.model.frame.Frame at 0x148943390>, 'angry'),

(<malaya_speech.model.frame.Frame at 0x1489433d0>, 'angry'),

(<malaya_speech.model.frame.Frame at 0x148943450>, 'neutral'),

(<malaya_speech.model.frame.Frame at 0x148943410>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943310>, 'angry'),

(<malaya_speech.model.frame.Frame at 0x148943490>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x1489434d0>, 'sad'),

(<malaya_speech.model.frame.Frame at 0x148943510>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x148943550>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x1489435d0>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943590>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943650>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943690>, 'sad'),

(<malaya_speech.model.frame.Frame at 0x148943610>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x148943710>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x1489436d0>, 'disgust')]

[21]:

import matplotlib.pyplot as plt

[22]:

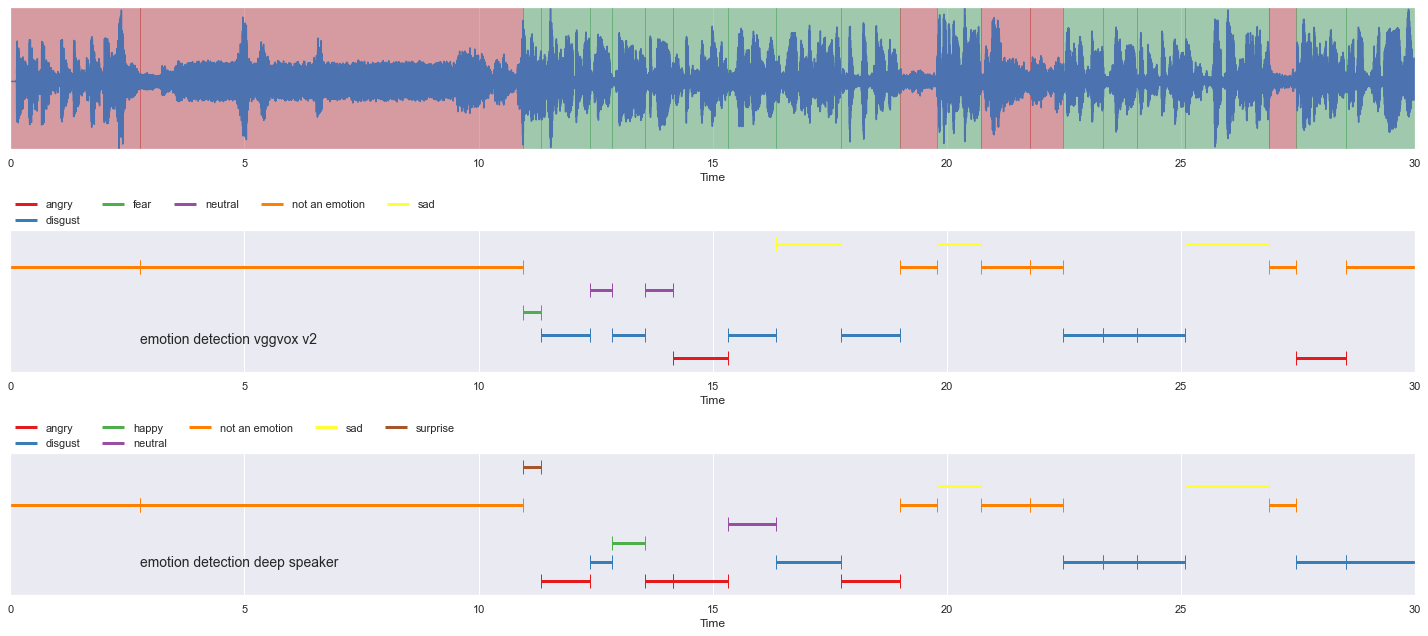

nrows = 3

fig, ax = plt.subplots(nrows = nrows, ncols = 1)

fig.set_figwidth(20)

fig.set_figheight(nrows * 3)

malaya_speech.extra.visualization.visualize_vad(y, grouped_vad, sr, ax = ax[0])

malaya_speech.extra.visualization.plot_classification(samples_vad_vggvox_v2,

'emotion detection vggvox v2', ax = ax[1])

malaya_speech.extra.visualization.plot_classification(samples_vad_deep_speaker,

'emotion detection deep speaker', ax = ax[2])

fig.tight_layout()

plt.show()

[23]:

p_quantized_vggvox_v2 = Pipeline()

pipeline = (

p_quantized_vggvox_v2.foreach_map(quantized_vggvox_v2)

.flatten()

)

p_quantized_vggvox_v2.visualize()

[23]:

[24]:

%%time

samples_vad = [g[0] for g in grouped_vad]

result_quantized_vggvox_v2 = p_quantized_vggvox_v2.emit(samples_vad)

result_quantized_vggvox_v2.keys()

CPU times: user 4.84 s, sys: 882 ms, total: 5.72 s

Wall time: 1.49 s

[24]:

dict_keys(['emotion', 'flatten'])

[25]:

samples_vad_quantized_vggvox_v2 = [(frame, result_quantized_vggvox_v2['flatten'][no]) for no, frame in enumerate(samples_vad)]

samples_vad_quantized_vggvox_v2

[25]:

[(<malaya_speech.model.frame.Frame at 0x1489370d0>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x148943210>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x1489431d0>, 'fear'),

(<malaya_speech.model.frame.Frame at 0x148943290>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x1489432d0>, 'neutral'),

(<malaya_speech.model.frame.Frame at 0x148943350>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943390>, 'neutral'),

(<malaya_speech.model.frame.Frame at 0x1489433d0>, 'angry'),

(<malaya_speech.model.frame.Frame at 0x148943450>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943410>, 'sad'),

(<malaya_speech.model.frame.Frame at 0x148943310>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943490>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x1489434d0>, 'sad'),

(<malaya_speech.model.frame.Frame at 0x148943510>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x148943550>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x1489435d0>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943590>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943650>, 'disgust'),

(<malaya_speech.model.frame.Frame at 0x148943690>, 'sad'),

(<malaya_speech.model.frame.Frame at 0x148943610>, 'not an emotion'),

(<malaya_speech.model.frame.Frame at 0x148943710>, 'angry'),

(<malaya_speech.model.frame.Frame at 0x1489436d0>, 'not an emotion')]

[26]:

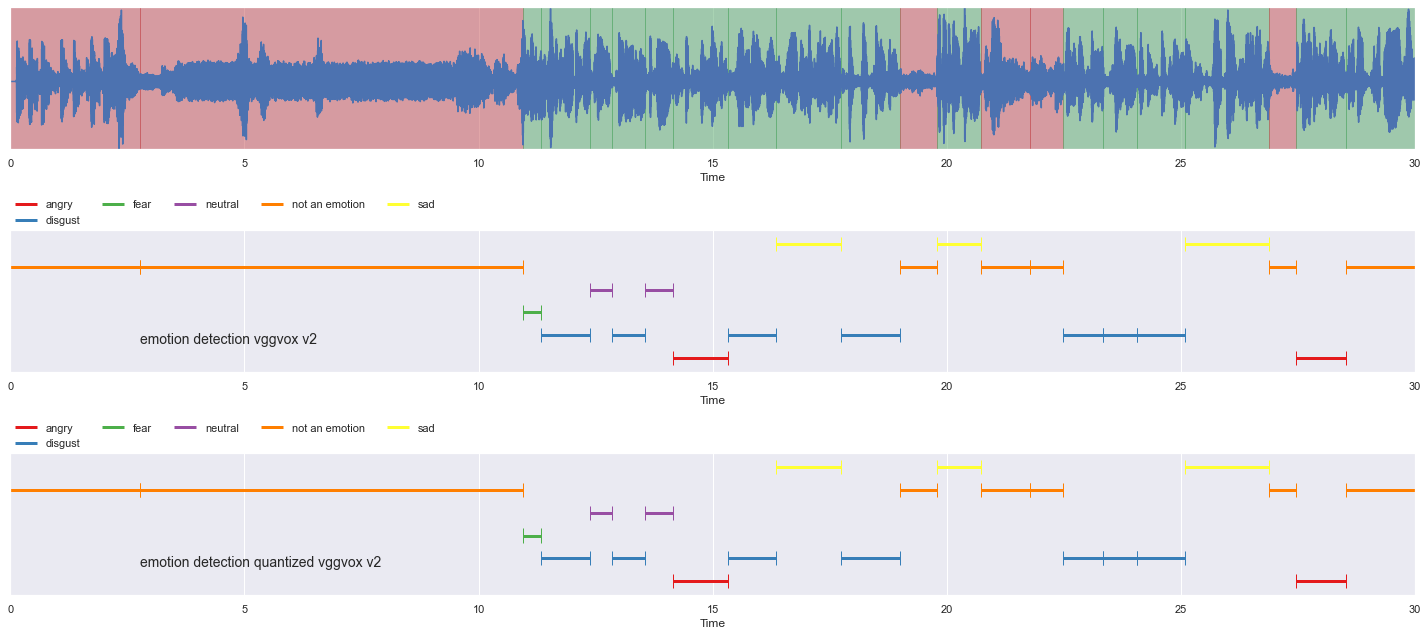

nrows = 3

fig, ax = plt.subplots(nrows = nrows, ncols = 1)

fig.set_figwidth(20)

fig.set_figheight(nrows * 3)

malaya_speech.extra.visualization.visualize_vad(y, grouped_vad, sr, ax = ax[0])

malaya_speech.extra.visualization.plot_classification(samples_vad_vggvox_v2,

'emotion detection vggvox v2', ax = ax[1])

malaya_speech.extra.visualization.plot_classification(samples_vad_quantized_vggvox_v2,

'emotion detection quantized vggvox v2', ax = ax[2])

fig.tight_layout()

plt.show()

Reference#

Toronto emotional speech set (TESS), https://tspace.library.utoronto.ca/handle/1807/24487

The Singaporean White Boy - The Shan and Rozz Show: EP7, https://www.youtube.com/watch?v=HylaY5e1awo&t=2s&ab_channel=Clicknetwork