Speech-to-Text RNNT web inference using Gradio#

Encoder model + RNNT loss web inference using Gradio

This tutorial is available as an IPython notebook at malaya-speech/example/stt-transducer-gradio.

This module is not language independent, so it is not safe to use on other languages. The pretrained models were trained on hyperlocal languages.

[1]:

import malaya_speech

import numpy as np

from malaya_speech import Pipeline

`pyaudio` is not available, `malaya_speech.streaming.stream` is not able to use.
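The warning above only means that an optional dependency is missing. As a minimal sketch, the check below reports which optional packages cannot be imported before the rest of the notebook runs. The helper name `missing_packages` is hypothetical and not part of `malaya_speech`. The package list is also an assumption: `pyaudio` comes from the warning, and `gradio` is included because the web interface later in this tutorial depends on it.

```python
import importlib.util


def missing_packages(names):
    """Return the names from `names` that cannot be found for import."""
    return [name for name in names if importlib.util.find_spec(name) is None]


# `pyaudio` is only needed for microphone streaming,
# `gradio` is needed for the web interface shown later.
missing = missing_packages(['pyaudio', 'gradio'])
if missing:
    print('Optional packages not installed:', ', '.join(missing))
```

If `gradio` shows up in the list, install it before calling `model.gradio(...)`.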

[2]:

import logging

logging.basicConfig(level=logging.INFO)

List available RNNT model#

[4]:

malaya_speech.stt.transducer.available_transformer()

INFO:malaya_speech.stt:for `malay-fleur102` language, tested on FLEURS102 `ms_my` test set, https://github.com/huseinzol05/malaya-speech/tree/master/pretrained-model/prepare-stt

INFO:malaya_speech.stt:for `malay-malaya` language, tested on malaya-speech test set, https://github.com/huseinzol05/malaya-speech/tree/master/pretrained-model/prepare-stt

INFO:malaya_speech.stt:for `singlish` language, tested on IMDA malaya-speech test set, https://github.com/huseinzol05/malaya-speech/tree/master/pretrained-model/prepare-stt

[4]:

| Model | Size (MB) | Quantized Size (MB) | malay-malaya | malay-fleur102 | Language | singlish |
|---|---|---|---|---|---|---|
| tiny-conformer | 24.4 | 9.14 | {'WER': 0.2128108, 'CER': 0.08136871, 'WER-LM'... | {'WER': 0.2682816, 'CER': 0.13052725, 'WER-LM'... | [malay] | NaN |
| small-conformer | 49.2 | 18.1 | {'WER': 0.19853302, 'CER': 0.07449528, 'WER-LM... | {'WER': 0.23412149, 'CER': 0.1138314813, 'WER-... | [malay] | NaN |
| conformer | 125 | 37.1 | {'WER': 0.16340855635999124, 'CER': 0.05897205... | {'WER': 0.20090442596, 'CER': 0.09616901, 'WER... | [malay] | NaN |
| large-conformer | 404 | 107 | {'WER': 0.1566839, 'CER': 0.0619715, 'WER-LM':... | {'WER': 0.1711028238, 'CER': 0.077953559, 'WER... | [malay] | NaN |
| conformer-stack-2mixed | 130 | 38.5 | {'WER': 0.1889883954, 'CER': 0.0726845531, 'WE... | {'WER': 0.244836948, 'CER': 0.117409327, 'WER-... | [malay, singlish] | {'WER': 0.08535878149, 'CER': 0.0452357273822,... |
| small-conformer-singlish | 49.2 | 18.1 | NaN | NaN | [singlish] | {'WER': 0.087831, 'CER': 0.0456859, 'WER-LM': ... |
| conformer-singlish | 125 | 37.1 | NaN | NaN | [singlish] | {'WER': 0.07779246, 'CER': 0.0403616, 'WER-LM'... |
| large-conformer-singlish | 404 | 107 | NaN | NaN | [singlish] | {'WER': 0.07014733, 'CER': 0.03587201, 'WER-LM... |
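The table is a trade-off between model size and error rate, restricted to the languages each model supports. As a rough sketch of how one might choose a model name from it, the snippet below hard-codes the unquantized size and the WER (without language model) for each model and returns the lowest-WER model that fits a size budget. The `MODELS` dictionary and the `pick_model` helper are hypothetical and not part of `malaya_speech`. The Malay WER is taken from the `malay-malaya` column only, which is an arbitrary choice for this illustration.

```python
# Values copied from the table above: unquantized size in MB and WER without LM.
# 'malay' uses the malay-malaya test set; 'singlish' uses the singlish test set.
MODELS = {
    'tiny-conformer': {'size_mb': 24.4, 'wer': {'malay': 0.2128108}},
    'small-conformer': {'size_mb': 49.2, 'wer': {'malay': 0.19853302}},
    'conformer': {'size_mb': 125, 'wer': {'malay': 0.16340855635999124}},
    'large-conformer': {'size_mb': 404, 'wer': {'malay': 0.1566839}},
    'conformer-stack-2mixed': {
        'size_mb': 130,
        'wer': {'malay': 0.1889883954, 'singlish': 0.08535878149},
    },
    'small-conformer-singlish': {'size_mb': 49.2, 'wer': {'singlish': 0.087831}},
    'conformer-singlish': {'size_mb': 125, 'wer': {'singlish': 0.07779246}},
    'large-conformer-singlish': {'size_mb': 404, 'wer': {'singlish': 0.07014733}},
}


def pick_model(language, max_size_mb):
    """Return the lowest-WER model for `language` whose size fits the budget."""
    candidates = [
        (info['wer'][language], name)
        for name, info in MODELS.items()
        if language in info['wer'] and info['size_mb'] <= max_size_mb
    ]
    if not candidates:
        raise ValueError(f'no {language!r} model within {max_size_mb} MB')
    # Tuples sort by WER first, so min() picks the most accurate candidate.
    return min(candidates)[1]


print(pick_model('malay', 130))     # conformer
print(pick_model('singlish', 130))  # conformer-singlish
```

The returned name can be passed as the `model` argument when loading a model.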

Load RNNT model#

def transformer(
    model: str = 'conformer',
    quantized: bool = False,
    **kwargs,
):
    """
    Load Encoder-Transducer ASR model.

    Parameters
    ----------
    model : str, optional (default='conformer')
        Check available models at `malaya_speech.stt.transducer.available_transformer()`.
    quantized : bool, optional (default=False)
        if True, will load 8-bit quantized model.
        Quantized model not necessary faster, totally depends on the machine.

    Returns
    -------
    result : malaya_speech.model.transducer.Transducer class
    """
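Since the docstring notes that a quantized model is not necessarily faster, the practical way to decide is to time both variants on your own machine. The helpers below are a minimal sketch of such a comparison. `average_seconds` and `compare_quantized` are hypothetical names, and the `load` and `transcribe` callables are placeholders you supply yourself; nothing here is part of `malaya_speech`.

```python
import time


def average_seconds(fn, repeats=3):
    """Return the mean wall-clock time in seconds of calling `fn()`."""
    if repeats < 1:
        raise ValueError('repeats must be at least 1')
    start = time.perf_counter()
    for _ in range(repeats):
        fn()
    return (time.perf_counter() - start) / repeats


def compare_quantized(load, transcribe, repeats=3):
    """Time `transcribe(model)` for the normal and the quantized model.

    `load(quantized)` must return a model, and `transcribe(model)` must run
    one decoding pass on a fixed audio clip. Both are supplied by the caller.
    """
    timings = {}
    for quantized in (False, True):
        model = load(quantized)
        timings[quantized] = average_seconds(lambda: transcribe(model), repeats)
    return timings
```

In this tutorial, `load` could be `lambda q: malaya_speech.stt.transducer.transformer(model='conformer', quantized=q)`, and `transcribe` would wrap whichever decoding call you normally run on a short audio sample. The first call often includes one-off warm-up cost, so consider running the comparison twice and keeping the second result.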

[5]:

model = malaya_speech.stt.transducer.transformer(model = 'conformer')

2023-02-01 12:00:10.062604: I tensorflow/core/platform/cpu_feature_guard.cc:142] This TensorFlow binary is optimized with oneAPI Deep Neural Network Library (oneDNN) to use the following CPU instructions in performance-critical operations: AVX2 FMA

To enable them in other operations, rebuild TensorFlow with the appropriate compiler flags.

2023-02-01 12:00:10.177269: I tensorflow/stream_executor/cuda/cuda_gpu_executor.cc:937] successful NUMA node read from SysFS had negative value (-1), but there must be at least one NUMA node, so returning NUMA node zero

2023-02-01 12:00:10.761296: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1510] Created device /device:GPU:0 with 12845 MB memory: -> device: 0, name: NVIDIA GeForce RTX 3090 Ti, pci bus id: 0000:01:00.0, compute capability: 8.6

2023-02-01 12:00:10.761729: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1510] Created device /device:GPU:1 with 1119 MB memory: -> device: 1, name: NVIDIA GeForce RTX 3090 Ti, pci bus id: 0000:07:00.0, compute capability: 8.6

2023-02-01 12:00:20.329358: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1510] Created device /job:localhost/replica:0/task:0/device:GPU:0 with 12845 MB memory: -> device: 0, name: NVIDIA GeForce RTX 3090 Ti, pci bus id: 0000:01:00.0, compute capability: 8.6

2023-02-01 12:00:20.329572: I tensorflow/core/common_runtime/gpu/gpu_device.cc:1510] Created device /job:localhost/replica:0/task:0/device:GPU:1 with 1119 MB memory: -> device: 1, name: NVIDIA GeForce RTX 3090 Ti, pci bus id: 0000:07:00.0, compute capability: 8.6

Web inference using Gradio#

def gradio(self, record_mode: bool = True, **kwargs):
    """
    Transcribe an input using beam decoder on Gradio interface.

    Parameters
    ----------
    record_mode: bool, optional (default=True)
        if True, Gradio will use record mode, else, file upload mode.
    **kwargs: keyword arguments for beam decoder and `iface.launch`.
    """

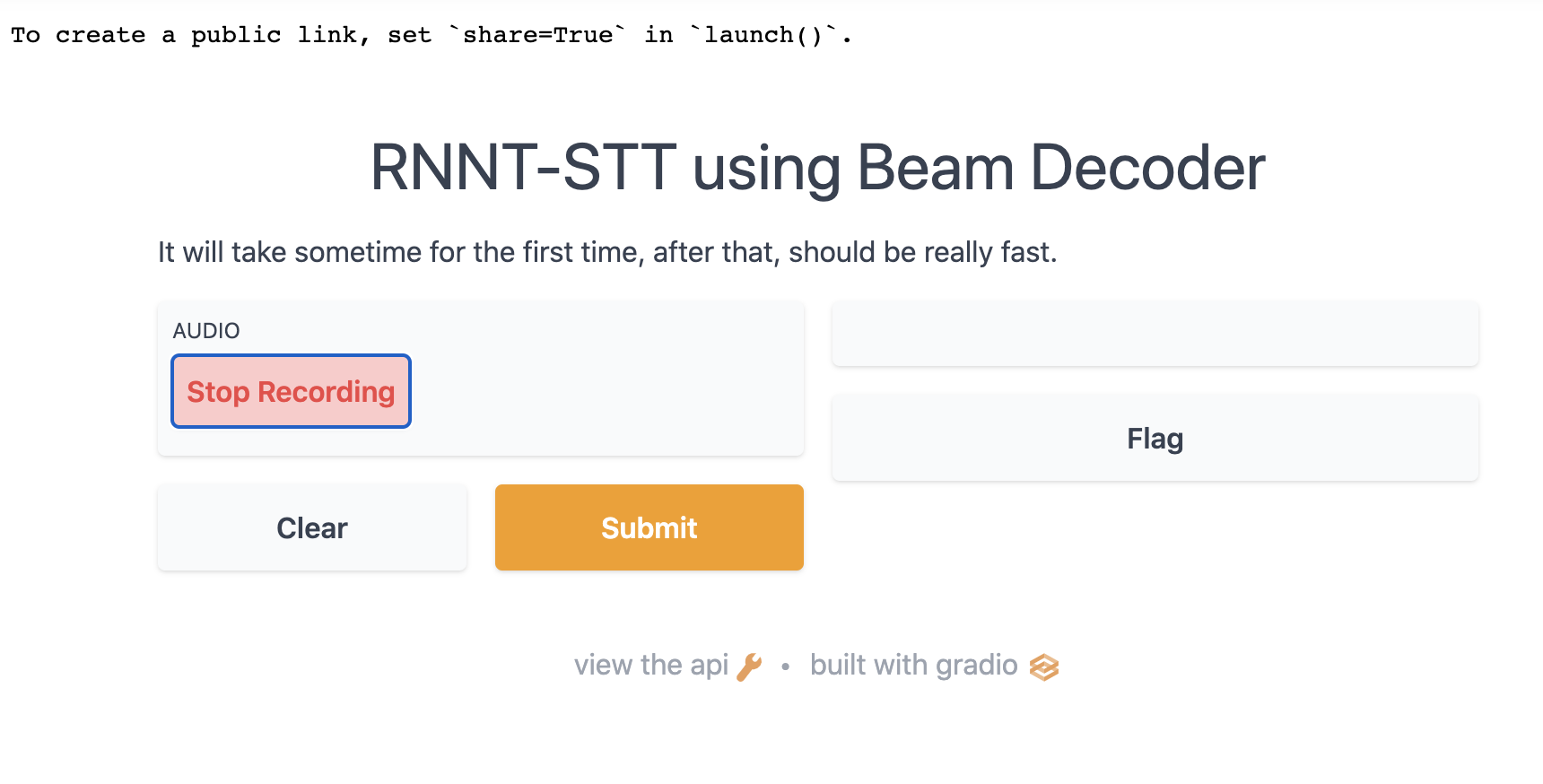

Record mode#

[7]:

model.gradio(record_mode = True)

[10]:

from IPython.display import Image, display

display(Image('record-mode.png', width=800))

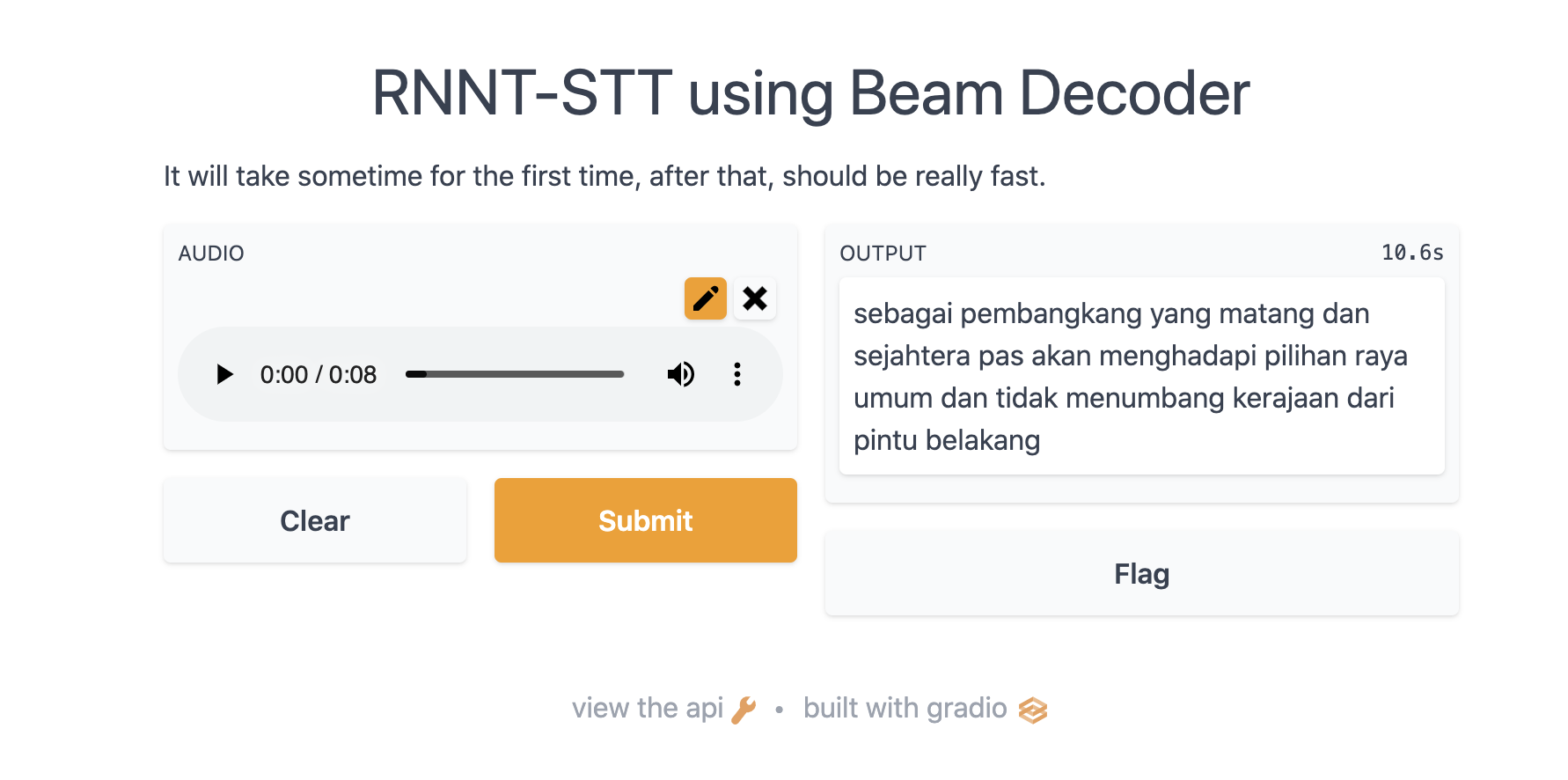

Upload mode#

[9]:

model.gradio(record_mode = False)

[11]:

from IPython.display import Image, display

display(Image('upload-mode.png', width=800))
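The two modes differ only in the `record_mode` flag, so a thin wrapper can make the choice explicit by name. The function below is a small sketch under that assumption. `launch_demo` is a hypothetical helper, not part of `malaya_speech`; it relies only on the documented `gradio(record_mode, **kwargs)` signature and forwards any extra keyword arguments unchanged.

```python
def launch_demo(model, mode='record', **kwargs):
    """Launch the Gradio interface of `model` in 'record' or 'upload' mode."""
    modes = {'record': True, 'upload': False}
    if mode not in modes:
        raise ValueError(f"mode must be one of {sorted(modes)}, got {mode!r}")
    # Extra keyword arguments are passed through to `model.gradio` untouched.
    return model.gradio(record_mode=modes[mode], **kwargs)
```

With the model loaded earlier, `launch_demo(model, 'upload')` would be equivalent to `model.gradio(record_mode = False)`.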
